You open another job board tab, type “quality assurance internship,” and see the same pattern again. Old listings. Vague listings. Agency reposts. Roles marked remote that turn out to be hybrid after three interview rounds. By the time you find something real, it already looks crowded.

That frustration is normal. It also pushes a lot of good candidates toward the wrong conclusion, which is that entry-level tech hiring is random and inaccessible. It isn’t. But you do need a lane that gives you direct evidence of value, not just potential.

A quality assurance internship does that better than most entry points. It gives you a product to test, a workflow to learn, a team to support, and a trail of work you can point to in interviews. If you’re trying to break into software, switch from another field, or find a remote-friendly path that doesn’t require pretending you’re already senior, QA is one of the cleanest ways in.

Why a Quality Assurance Internship Is Your Smartest Career Move

The strongest reason to pursue a quality assurance internship is simple. It teaches skills companies can evaluate quickly.

Engineering managers may debate portfolios, credentials, and side projects. They rarely debate whether someone can reproduce a bug, write a clean defect report, think through edge cases, and communicate clearly with developers. Those are useful on day one.

The career math is also better than many people assume. The average salary for a Quality Assurance Internship in the United States is $37,029 per year as of October 2025, and 66.4% of interns overall secure full-time jobs post-internship, often with a $15,000 salary premium. The broader field is also expanding, with QA-related jobs projected to grow 15% from 2024 to 2034 according to Zippia’s quality assurance internship salary and outlook data.

That matters because QA isn’t just a temporary stop. It can lead into software testing, test automation, release quality, platform QA, accessibility testing, API testing, and product roles that reward careful thinking.

Why QA gives you leverage early

A lot of junior candidates try to compete on breadth. They list every tool they’ve touched and every tutorial they’ve finished. Hiring teams usually care more about whether you can operate inside a delivery process.

QA internships put you close to the actual work:

- You learn product behavior: You stop thinking in abstract “features” and start thinking in user flows, failure paths, and business risk.

- You build evidence fast: A bug report, a test case, and a regression checklist are concrete artifacts. They’re easier to discuss than “I’m passionate about tech.”

- You become useful across teams: Good QA interns help developers, product managers, support teams, and sometimes customer success because they spot problems before users do.

Practical rule: If you want a first role that rewards discipline more than self-promotion, QA is hard to beat.

The best part is that the barrier to entry is lower than many candidates think. You do not need to be an expert. You need to be observant, methodical, and coachable.

What You Will Actually Do as a QA Intern

A quality assurance internship is not just clicking around an app and saying whether it works. More accurately, the job involves learning how a team defines quality, then helping them verify it in a repeatable way.

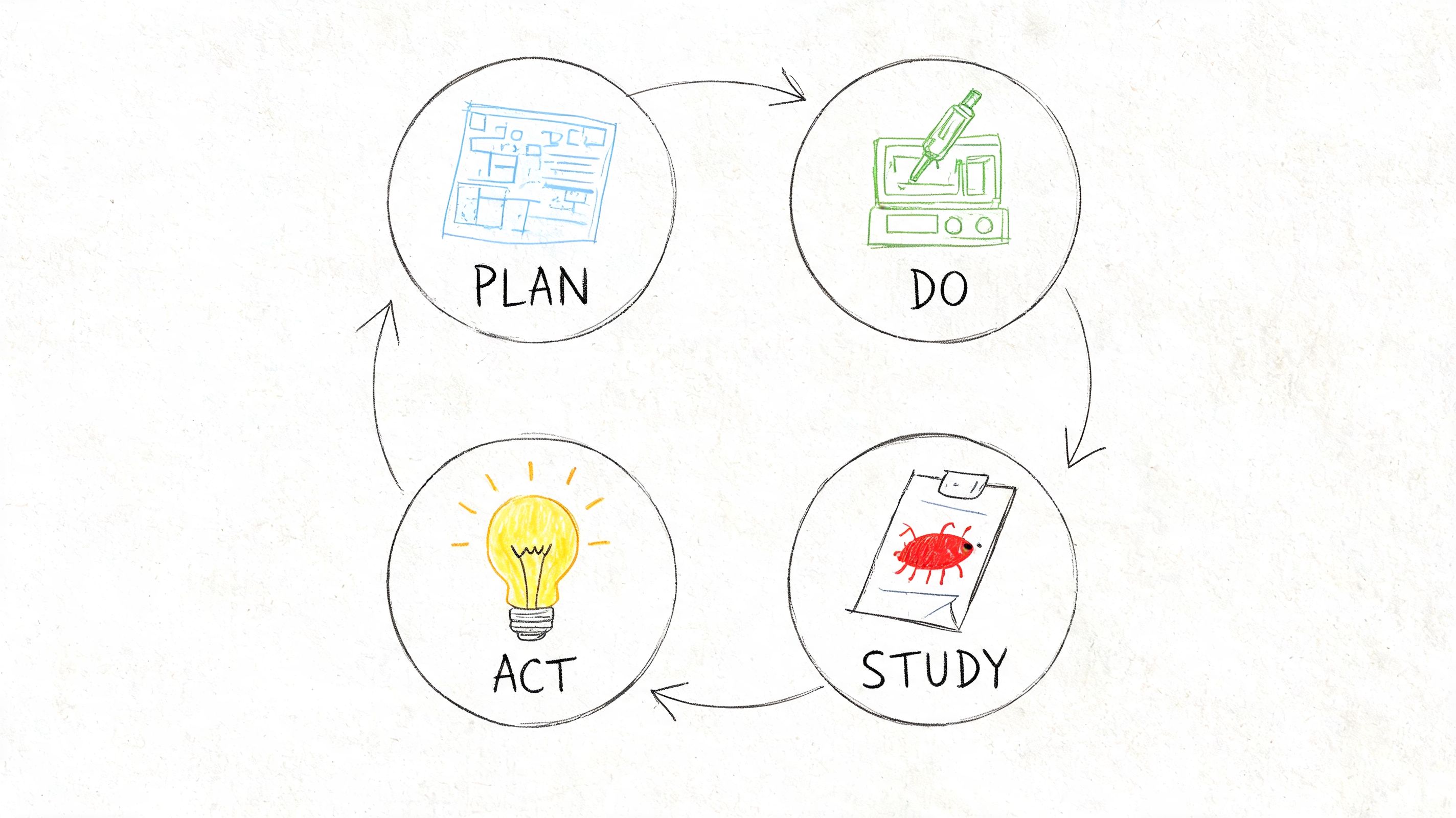

One of the most useful ways to understand the role is through the Plan-Do-Study-Act cycle. In a typical QA internship, you’ll apply that structure by planning measurable goals, running small tests, reviewing what happened, and improving the process. That approach has helped startup teams achieve 25-40% faster release velocities according to Parallel’s explanation of success-rate tracking and PDSA in QA work.

Plan

Junior candidates commonly underestimate this part of the job. Strong QA starts before testing.

You’ll read requirements, acceptance criteria, tickets in Jira, and product notes in Notion or Confluence. Then you’ll translate those into things that can be checked. If a signup flow is changing, you won’t just note “test signup.” You’ll ask what happens with invalid email formats, expired links, duplicate accounts, weak passwords, mobile browsers, and interrupted sessions.

This is also where test cases come in. If you’ve never written them before, a practical test case creation guide is worth studying because it shows how to turn a vague feature into specific actions, expected results, and preconditions.

A QA intern who can think this way becomes useful quickly. You help the team catch ambiguity before code is even merged.
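If it helps to see that signup thinking written down, here is a minimal sketch of table-driven test cases in Python. Everything in it is an assumption made for illustration: `validate_signup` is a stand-in for the system under test, and the email pattern, password length, and error codes are hypothetical rules, not any real product's behavior.

```python
import re
from dataclasses import dataclass

# Hypothetical rules for illustration; a real product defines its own.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
MIN_PASSWORD_LENGTH = 8


def validate_signup(email: str, password: str, existing_emails: set) -> str:
    """Stand-in for the system under test. Returns "ok" or an error code."""
    if not EMAIL_PATTERN.match(email):
        return "invalid_email"
    if email.lower() in existing_emails:
        return "duplicate_account"
    if len(password) < MIN_PASSWORD_LENGTH:
        return "weak_password"
    return "ok"


@dataclass(frozen=True)
class SignupCase:
    name: str
    email: str
    password: str
    expected: str


EXISTING = {"taken@example.com"}

# One row per question you would ask about the feature.
CASES = [
    SignupCase("happy path", "new@example.com", "correct-horse-9", "ok"),
    SignupCase("missing @", "newexample.com", "correct-horse-9", "invalid_email"),
    SignupCase("space in address", "new user@example.com", "correct-horse-9", "invalid_email"),
    SignupCase("duplicate, different case", "Taken@Example.com", "correct-horse-9", "duplicate_account"),
    SignupCase("weak password", "new@example.com", "short", "weak_password"),
]


def run_cases(cases):
    """Return (name, expected, actual) for every case that does not match."""
    failures = []
    for case in cases:
        actual = validate_signup(case.email, case.password, EXISTING)
        if actual != case.expected:
            failures.append((case.name, case.expected, actual))
    return failures
```

The useful part is the table, not the validator. Each row names a condition, an input, and an expected result, which is the same shape a written test case takes in a spreadsheet or test management tool.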

Do

Execution is the part people imagine first, but it’s more disciplined than it looks.

You might test a staging environment, validate a feature branch, or rerun a regression checklist after a bug fix. Some days that means manual testing. You use the app like a user would, then deliberately do the things real users do but product teams forget to model. You refresh pages at awkward times. You paste malformed data. You switch browsers. You break assumptions.

Other days you’ll support automation work. At intern level, that often means reviewing existing Selenium or Cypress tests, helping update selectors, verifying test data, or documenting where automated coverage is weak. You don’t need to be an automation engineer on day one, but you should understand what automation is good at and what still needs a human eye.

Here’s the trade-off most juniors miss:

- Manual testing is better for exploratory work, usability issues, and weird edge cases.

- Automation is better for repeatable checks, regression coverage, and stable workflows that shouldn’t break release after release.

Good teams use both. Weak teams try to force everything into one bucket.

A QA intern stands out when they know when to explore and when to standardize.

Study

Testing is not finished when you find a bug. It’s finished when you can explain it so clearly that a developer doesn’t need a follow-up meeting to reproduce it.

This part of the internship teaches judgment. You’ll compare expected behavior to actual behavior, review logs or console errors when available, and decide whether something is a blocker, a minor defect, or just a misunderstanding of the requirement.

A useful bug report usually includes:

- Clear reproduction steps: Enough detail that someone else can follow them exactly.

- Expected result: What the system should have done.

- Actual result: What happened, without exaggeration.

- Evidence: Screenshots, screen recordings, environment details, or error messages.

- Impact: Why this matters to a user or release.

The discipline here matters more in remote teams because people can’t rely on hallway conversations to fill in missing context.
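One way to practice that discipline is to treat a bug report as structured data. The sketch below is an assumption-heavy illustration, not a team standard: the `BugReport` class, its field names, and the sample defect are all hypothetical, chosen to mirror the list above.

```python
from dataclasses import dataclass, field


@dataclass
class BugReport:
    title: str
    environment: str
    steps: list
    expected: str
    actual: str
    impact: str
    evidence: list = field(default_factory=list)

    def missing_fields(self):
        """List the parts a reviewer would otherwise have to ask for."""
        missing = []
        for name in ("title", "environment", "expected", "actual", "impact"):
            if not getattr(self, name).strip():
                missing.append(name)
        if not self.steps:
            missing.append("steps")
        if not self.evidence:
            missing.append("evidence")
        return missing

    def to_text(self):
        """Render the report in a plain layout suitable for a ticket body."""
        lines = [
            f"Title: {self.title}",
            f"Environment: {self.environment}",
            "Steps to reproduce:",
        ]
        lines += [f"  {i}. {step}" for i, step in enumerate(self.steps, start=1)]
        lines += [
            f"Expected: {self.expected}",
            f"Actual: {self.actual}",
            f"Impact: {self.impact}",
            "Evidence: " + (", ".join(self.evidence) or "none attached"),
        ]
        return "\n".join(lines)


# Hypothetical defect used only to exercise the structure.
report = BugReport(
    title="Password reset link returns 404 after reload",
    environment="Staging, Chrome on macOS",
    steps=["Request a password reset", "Open the emailed link", "Reload the page"],
    expected="Reset form stays available until the link expires",
    actual="Page returns 404 on reload",
    impact="Users who refresh the page cannot reset their password",
)
```

Calling `report.missing_fields()` on this sample flags the absent evidence before anyone else has to ask for it, which is the habit the list is trying to build.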

Act

The final part is adjustment. Maybe the bug report template needs cleaner severity labels. Maybe a flaky test should stay manual for now. Maybe a checkout flow needs a shared regression sheet before release day. Maybe your first version of a test suite missed obvious negative cases.

That’s normal.

What matters is whether you improve the next cycle. The strongest interns don’t chase perfection. They tighten the process every week.

What a real day often feels like

A practical quality assurance internship usually mixes several of these tasks in one day:

- Morning standup: Share what you tested, what’s blocked, and what needs retest.

- Ticket review: Read feature requirements and ask clarifying questions before execution.

- Hands-on testing: Validate a story in staging, then log defects in Jira.

- Retesting fixes: Confirm whether an issue marked resolved is actually fixed.

- Documentation: Update test cases, regression notes, or release checklists.

- Team communication: Sync with developers, product, or a mentor on risk areas.

If that sounds less glamorous than social media makes tech work look, that’s because it is. It’s also how real trust gets built.

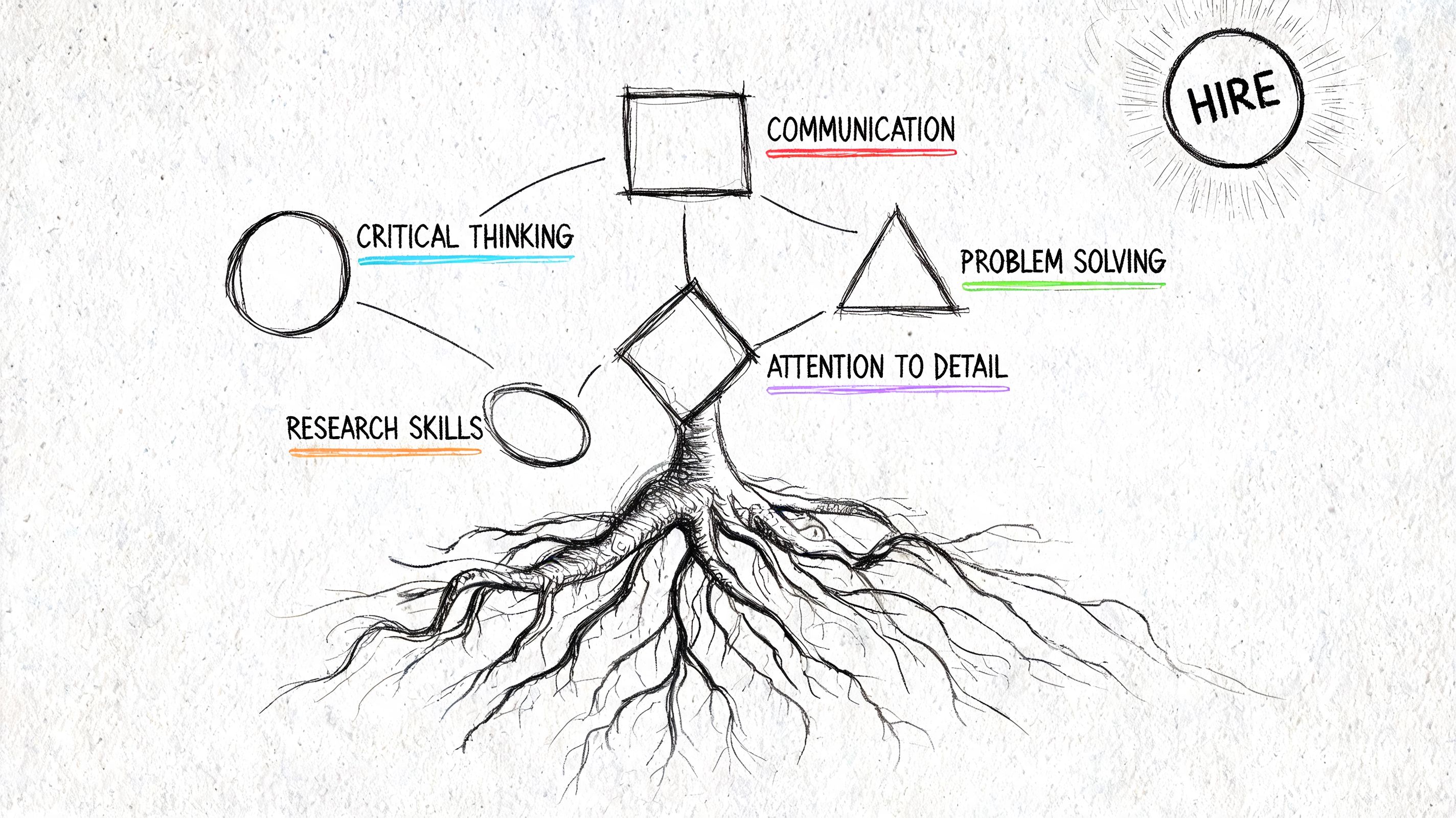

The Essential Skills That Get You Hired

The candidates who land a quality assurance internship aren’t always the ones with the most technical buzzwords. They’re usually the ones who can show reliable thinking.

That means two skill sets matter at once. First, you need enough technical fluency to understand how software behaves. Second, you need the human skills that make your findings useful to other people.

Technical must-haves

You do not need deep expertise across the whole stack. You do need a working toolkit.

Start with the basics most interns touch:

- Bug tracking tools: Jira is the most common example. Learn how to write issues cleanly, link tickets, and update status without creating noise.

- Test case management: TestRail, Zephyr, spreadsheets, or internal templates. The exact tool matters less than understanding traceability.

- Browser inspection: DevTools helps you inspect requests, console errors, layout problems, and network behavior.

- Web fundamentals: HTML, CSS, forms, cookies, sessions, and how browsers differ. You’re not expected to build the app, but you should know what you’re testing.

- API basics: Postman is a common starting point. Learn requests, status codes, headers, and how to validate responses.

- Automation concepts: Selenium, Cypress, or Playwright may show up in internships even if you only assist. Know what a locator is, why flaky tests happen, and when automation is worth the effort.

What doesn’t work is trying to memorize everything at once. Hiring teams can tell when a candidate has watched tutorials without connecting them to real testing work.

A better approach is to build one small practice flow. Pick a public demo app. Write test cases. Run them manually. Log defects. Then test a simple API endpoint in Postman. That sequence teaches more than a long list of disconnected certificates.
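Once the Postman step feels comfortable, the same checks can be written as code. This is a rough sketch under stated assumptions: `check_response` is a hypothetical helper, it works on values you have already captured (status, headers, body text) and makes no network call, and the required fields are placeholders for whatever your endpoint should return.

```python
import json


def check_response(status, headers, body, expected_status, required_fields):
    """Return a list of readable problems; an empty list means the response passed."""
    problems = []
    if status != expected_status:
        problems.append(f"status {status}, expected {expected_status}")

    # HTTP header names are case-insensitive, so normalise before lookup.
    normalised = {key.lower(): value for key, value in headers.items()}
    content_type = normalised.get("content-type", "")
    if "application/json" not in content_type:
        problems.append(f"unexpected content type: {content_type or 'missing'}")
        return problems

    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        problems.append("body is not valid JSON")
        return problems

    if not isinstance(payload, dict):
        problems.append("body is not a JSON object")
        return problems

    for name in required_fields:
        if name not in payload:
            problems.append(f"missing field: {name}")
    return problems


# Hypothetical captured responses, e.g. copied from a Postman run.
good = check_response(
    200,
    {"Content-Type": "application/json; charset=utf-8"},
    '{"id": 7, "email": "a@example.com"}',
    200,
    ["id", "email"],
)
bad = check_response(
    500,
    {"content-type": "application/json"},
    '{"error": "boom"}',
    200,
    ["id"],
)
```

Returning a list of problems instead of stopping at the first one is a deliberate choice here: a defect report is more useful when it names everything wrong with a response at once.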

Career-making soft skills

Here, many strong candidates from non-traditional backgrounds gain an edge.

Soft skills like stakeholder management and staying calm under pressure reportedly make interns about twice as likely to convert to full-time remote roles, and cross-functional QA hires rose 15% in 2025-2026, according to Vanderlande career-related QA internship insights.

That tracks with what good remote QA requires. If your bug reports are sharp but your communication is sloppy, the team still loses time. If you find issues but panic during release week, people remember the panic.

The soft skills that change outcomes are often familiar, even if you didn’t learn them in tech:

- Attention to detail from retail or operations: Spotting mismatches, missing labels, and process gaps maps well to defect finding.

- Customer empathy from support roles: You quickly understand where user frustration starts.

- Written clarity from admin work: Remote QA lives in written updates, comments, and defect logs.

- Composure from hospitality or service jobs: Release pressure feels different, but calm execution translates directly.

- Prioritization from project coordination: Not every bug deserves the same urgency.

A remote team won’t promote you just because you work hard. They’ll promote you when your work reduces ambiguity for everyone else.

What hiring managers notice fast

Candidates often ask what separates the shortlist from everyone else. Usually it’s not some rare tool. It’s signal.

Hiring teams notice when you can:

- Explain a defect crisply

- Ask a better clarifying question than other applicants

- Show proof of structured testing

- Write like someone other people can collaborate with

If you’re pivoting from another field, don’t hide that background. Translate it. A former teacher can explain documentation discipline. A former coordinator can explain process ownership. A former support rep can explain user-centered thinking.

That’s not fluff. In many remote QA settings, it’s a hiring advantage.

Building Your Application Toolkit From Scratch

Most applicants make their materials too generic. They download a resume template, list tools they barely know, write “detail-oriented,” and hope volume wins. It usually doesn’t.

A stronger application toolkit is smaller, sharper, and easier to verify. If you have no formal QA experience yet, your goal is to prove that you already think like a tester.

Start with your resume. Don’t write it like a biography. Write it like evidence.

Resume bullets that sound like QA work

Even if your background is unrelated, you can frame experience around quality, process, and communication.

Here are better before-and-after patterns.

Instead of: Worked on student software project

Write: Tested user flows for a student web app, documented defects with reproduction steps, and collaborated with teammates to verify fixes before submission.

Instead of: Customer service representative

Write: Resolved user issues through structured troubleshooting, documented recurring failure patterns, and communicated clear next steps across multiple channels.

Instead of: Managed inventory

Write: Maintained accuracy across high-volume process checks, identified discrepancies early, and escalated exceptions before they affected downstream operations.

The point isn’t to dress up unrelated work. The point is to show the same habits QA teams rely on.

Build a small portfolio project

If you do one thing after reading this article, do this.

Pick a simple web application you can test without special access. A demo ecommerce site, sample login flow, or open-source app works well. Your portfolio doesn’t need polish. It needs proof that you can inspect software systematically.

Create one folder with:

- A short test plan: What you tested and what you didn’t

- A handful of test cases: Clear steps and expected outcomes

- A bug log: A few issues written professionally

- A summary note: What risks you found and what you’d test next

Keep the artifacts easy to open in Google Docs, Notion, or a public portfolio page. Recruiters won’t dig through a complicated repo for intern-level evidence.

A good starter bug entry includes title, environment, precondition, steps to reproduce, expected result, actual result, and evidence. That’s enough to show judgment.

Best portfolio rule: One finished QA sample beats five unfinished “learning” projects.

Your cover letter should do one job

A cover letter for a quality assurance internship should not retell your resume. It should explain why QA fits your thinking.

Keep it short. Mention one example of how you approach detail, process, or user experience. Then connect that habit to testing. If you’re changing careers, say so plainly and explain why the move makes sense.

Avoid overpromising. “I’m passionate about ensuring software excellence across scalable ecosystems” says nothing. “I enjoy tracing small failures back to the assumption that caused them” sounds like a tester.

Small details that lift your application

These aren’t glamorous, but they matter:

- Use a clean file name: Include your name and role target.

- Match the job language carefully: If the posting says defect tracking, regression, and API testing, reflect those terms truthfully where relevant.

- Link to proof, not profiles alone: A GitHub account with no QA artifacts is weaker than one public doc with actual test work.

- Check every line for specificity: If a sentence could fit any internship, tighten it.

A hiring manager should be able to look at your application and think, “This person already understands how QA work is documented.”

How to Find Vetted Remote QA Internships

The search itself is where many candidates burn out. Not because they’re unqualified, but because the market is noisy.

Remote QA internships are harder to find than general QA internships. Fewer than 5% of listings on major boards mention remote or hybrid options, and applicant success for remote roles can drop by 40% because strong openings are often hidden on company career pages instead of public boards, as noted in Indeed-related research on QA internship listing patterns.

That creates two practical problems. First, broad job boards make remote work look more available than it really is. Second, by the time a good listing spreads across aggregators and social media, you’re already late.

What doesn’t work anymore

Many job seekers still use a high-volume approach:

- Search a giant board

- Apply to everything labeled QA

- Hope the numbers eventually work out

That strategy wastes time in remote hiring. You end up sorting through reposts, low-signal listings, expired roles, and “remote” jobs that implicitly require office attendance.

Another weak move is relying only on LinkedIn Easy Apply. It feels productive because it’s fast. It often produces low-quality pipelines because everyone else can do it just as fast.

Remote internship searches reward precision more than volume.

What a better search process looks like

A stronger method is to search closer to the employer source and move quickly when a listing appears.

Here’s the process I recommend:

1. Prioritize direct employer listings: Company career pages tend to be more accurate than reposted listings.

2. Filter for real QA language: Look for terms like regression, defect tracking, test cases, API testing, staging, release support, or automation exposure.

3. Read location details carefully: “Remote” in the title can still mean regional restrictions or hybrid expectations in the description.

4. Check recency: Newer listings usually mean less applicant pile-on and fewer dead links.

5. Apply with a relevant proof asset: A portfolio sample or concise bug log matters more when the field is smaller and more targeted.

Why direct sourcing gives you an edge

This is where a dedicated remote job engine is useful. Instead of depending on recycled aggregator listings, use a source that pulls roles directly from employer career pages. That helps you avoid stale listings, ghost jobs, and recruiter clutter.

A practical option is Remote First Jobs, which focuses on direct-sourced remote roles from company ATS and career pages. For job seekers who are tired of crowded public boards, that kind of sourcing matters because it improves listing quality and helps you spot openings earlier.

That speed matters more in internships than people think. At entry level, good candidates often look similar on paper. Freshness becomes a differentiator. If your application lands before a role gets flooded, your portfolio and writing have a better chance of being read.

What to look for in the listing itself

When you do find a remote quality assurance internship, read for signs of a healthy team:

- Clear responsibilities: Testing scope, documentation expectations, and collaboration details are spelled out.

- Specific tools: Jira, Postman, Cypress, Selenium, TestRail, or internal QA workflows are mentioned.

- Real environment context: The listing explains what product, team, or release process you’ll support.

- Direct-hire signals: The role sits on a real company page and points to an actual team.

If the description is vague, overstuffed, or reads like a generic recruiter template, treat it cautiously. A good internship usually sounds like a real team asking for real help.

Nailing the Interview and Your First 90 Days

Getting the interview means your application cleared the first filter. Now the team wants to know whether you can think through problems in a calm, structured way.

QA interviews at the internship level usually aren’t trying to trap you. They’re checking whether you can observe carefully, communicate clearly, and stay grounded when you don’t know something yet.

Common QA internship interview questions and answer strategy

| Interview Question | What They’re Really Asking | Answer Strategy |
|---|---|---|
| How would you test a login page? | Can you think beyond the obvious happy path? | Walk through valid login, invalid credentials, password reset, account lock behavior, session handling, browser differences, and basic usability. Show structure. |
| Describe a bug you found | Do you understand impact and communication? | Pick a specific example. Explain the context, steps, expected result, actual result, and why it mattered to users or release quality. |
| What makes a good bug report? | Will developers be able to work with you? | Emphasize reproducibility, clarity, environment details, evidence, and concise impact. |
| How do you prioritize bugs? | Can you judge risk instead of reporting everything the same way? | Separate severity from priority. Explain user impact, release risk, frequency, and business context. |
| What’s the difference between manual and automated testing? | Do you understand trade-offs? | Explain that manual testing is stronger for exploration and usability, while automation helps with repeatable regression checks. |
| What would you do if you couldn’t reproduce a reported issue? | How do you handle ambiguity? | Say you’d confirm environment details, gather more context, check logs or evidence, and avoid dismissing the issue too quickly. |
| How do you handle disagreement with a developer? | Can you collaborate under pressure? | Focus on the requirement, user impact, and evidence. Keep the discussion on behavior, not personalities. |
| Why QA? | Is this a deliberate choice or a backup plan? | Give a direct reason tied to how you think. Observation, process, product quality, and user experience are strong anchors. |

How to answer without sounding rehearsed

The biggest mistake candidates make is trying to sound advanced. Don’t pretend you’ve led full release cycles if you haven’t. Interviewers can tell.

Instead, use a simple structure:

- Situation: What were you testing?

- Task: What were you responsible for?

- Action: What did you check, document, or ask?

- Result: What changed because of your work?

That framework keeps your answer grounded. Even student projects or self-made portfolio samples work if the details are real.

If you’re early in your career, honesty plus structure beats fake expertise every time.

Questions you should ask them

A QA interview is also your chance to test the team.

Ask questions that reveal process quality:

- How are requirements documented before testing begins?

- What does a strong bug report look like on your team?

- How does QA work with developers during a sprint?

- What parts of testing are manual right now, and what parts are automated?

- How will success be measured for this internship?

Those questions do two things. They show maturity, and they help you avoid internships where nobody has thought through how to support a junior tester.

Your first 90 days on the job

Once you’re in, your work changes. You’re no longer proving potential. You’re building trust.

One of the most practical habits in your first months is metric tracking. In your first 90 days, focus on key indicators such as test case execution rate with a target of 98%, defect leakage to production with an aim below 5%, and your contribution to automation coverage, based on Group107’s QA process guidance for tracking impact.

That doesn’t mean obsessing over dashboards on day one. It means learning how your team defines useful output.
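If the metrics above feel abstract, the arithmetic is small enough to sketch. The formulas below are common definitions and are assumptions here, since teams count these differently: execution rate as executed cases over planned cases, and defect leakage as production defects over all known defects. The function names and sample numbers are hypothetical.

```python
def execution_rate(executed: int, planned: int) -> float:
    """Share of planned test cases actually run, as a percentage."""
    if planned <= 0:
        raise ValueError("planned must be positive")
    return 100.0 * executed / planned


def defect_leakage(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that escaped to production, as a percentage."""
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0
    return 100.0 * found_in_production / total


def meets_targets(rate: float, leakage: float,
                  rate_target: float = 98.0, leakage_limit: float = 5.0) -> bool:
    """Compare against the targets named above: at least 98% executed, under 5% leaked."""
    return rate >= rate_target and leakage < leakage_limit


# Hypothetical sprint: 196 of 200 planned cases run, 2 production defects, 48 caught earlier.
rate = execution_rate(196, 200)   # 98.0
leakage = defect_leakage(2, 48)   # 4.0
on_track = meets_targets(rate, leakage)
```

Ask your mentor which counts the team actually uses before you report anything. A number computed with a different denominator than everyone else's creates confusion, not trust.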

Days 1 through 30

Your first month is about orientation and reliability.

Learn the product before you try to impress people with edge cases. Read old tickets. Review release notes. Ask which workflows break most often. Understand the environments. Figure out where test data lives. Learn the team’s definition of done.

In remote settings, this is also the time to tighten your note-taking. You’ll hear expectations in standups, bug triage, sprint planning, and release calls. If that’s a weak point, it’s worth learning how to master meeting note taking so action items don’t vanish after a call.

A good first-month checklist looks like this:

- Study the product: Know the core user journeys and failure-prone areas.

- Mirror team conventions: Match bug report style, ticket hygiene, and communication habits.

- Ask narrow questions: “Should this error be blocking?” is easier to answer than “Can you explain the whole feature?”

- Close loops: If someone answers your question, apply it and confirm the update.

Days 31 through 60

This phase is about contribution. You should start handling repeatable testing work with less supervision.

That might mean owning part of a regression suite, documenting a new feature flow, or supporting retest cycles after bug fixes. You should also start noticing process friction. Maybe the same defect categories keep returning. Maybe test data setup wastes time. Maybe acceptance criteria are too vague before stories move into QA.

Write those observations down. Don’t turn them into speeches. Turn them into useful suggestions.

Days 61 through 90

By this stage, the question shifts from “Can they do the work?” to “Would we trust them with more?”

Documentation and impact tracking matter. Keep a private log of what you tested, what issues you found, what got fixed, what regressions you caught, and where you improved a team habit. Those notes make review conversations easier and help you argue for a return offer or full-time path.

A strong third-month pattern includes:

- Owning a slice of quality: A feature area, a recurring release checklist, or a test suite segment

- Communicating risk early: Flagging uncertainty before deadlines, not after them

- Improving one process: Cleaner defect templates, better retest notes, or better test case organization

- Showing consistency: Fewer reminders, sharper updates, steadier judgment

The interns who get remembered aren’t always the loudest. They’re the ones whose work other people can rely on.

A quality assurance internship can be the start of a serious career if you treat it that way. Learn the product. Write clearly. Test with intent. Keep score of your work. That combination travels well from internship to full-time role, especially in remote teams where clarity is part of performance.

If you’re tired of applying through noisy boards and want a cleaner path to real remote opportunities, Remote First Jobs is worth using. It pulls roles directly from employer career pages, filters out the usual spam and dead listings, and helps you spot remote openings before they get buried under mass applications. For QA internship seekers who want verified listings and a better shot at being early, that’s a real advantage.